Freedom of Expression

Westworld opens with a series of customer testimonials. They speak of a “vacation of the future”, one where you can do things like “shoot … people” or “marry a princess”. [1] At the Westworld park, there are no restrictions and no real-world consequences. Westworld gives us a glimpse not only of freedom of speech, but of freedom of action. A guest is allowed to rob, kill, or have sex with nearly any android in town. Androids are simply cleaned up, repaired, and restored each night. The freedom to act is far more dangerous than the freedom to speak. As shown in Westworld, it can lead to discriminatory thoughts and to behaviors like slavery and murder.

Innovations in technology made slavery ineffective. However, as we begin treating human-like androids as objects, we grow accustomed to the thoughts and feelings that slave owners held centuries ago. We see this progression in many guests in the park, including the main character, Peter. They enter Westworld afraid and unaware, some never having fired a gun. After shooting their first android, they become accustomed to the idea that the androids are expendable, and they don’t hesitate to do it again. The androids in Westworld are viewed much as slaves were in ancient times, as inhuman objects unable to possess souls [3] and made for one purpose: to “serve”. [1]

Guests don’t even have to think about the consequences of death or loss. A lack of behavioral reinforcement or consequence is what brought about new behavioral tendencies such as cyberbullying. Many cyberbullies are able to remain anonymous, which lets them escape both punishment and the feelings they would experience face-to-face. [6] Similarly, guests in Westworld don’t have to consider the consequences of loss or murder. In addition to being invulnerable, guests are not forced to think about the lives of the androids they kill. Killed androids are repaired and brought back into service the next day, behaving exactly as they did before, with no recollection of the murder they fell victim to.

It could be argued that the actions and thoughts exhibited in Westworld will not linger after guests leave. Much like a video game, Westworld could be seen as a place to release anger or bad thoughts. Studies have shown that video games can help treat certain mental illnesses such as anxiety and depression [5]. While Westworld may not be a traditional video game, it could very well provide the mental escape that troubled minds need.

The difference between Westworld and a traditional video game is that Westworld puts a gun in your hand. It lets you have sex, and it lets you spend your stolen cash at the bar. Westworld provides a sense of realism and reward that a video game controller can’t simulate. There’s no screen between you and the guy you just killed, and the park lets you feel the physical pleasure. Virtue ethics states that a right action is one that promotes the behavior one needs to flourish and truly be happy. [2] If everyone in our culture needed murder or nonconsensual sex to flourish, then humanity would literally kill itself.

References:

[1] Westworld. Metro-Goldwyn-Mayer, 1973.

[2] M. J. Quinn, Ethics for the Information Age. Upper Saddle River, NJ: Pearson Education/Addison-Wesley, 2017.

[3] J. Bober, "Imminent return of slavery: as new technology develops we will be face with new questions concerning the definition of slavery and humanity to be applied to androids," Humanist in Canada, (139), pp. 26, 2001.

[4] K. D. Farnsworth, "Can a Robot Have Free Will?" Entropy, vol. 19, (5), pp. 237, 2017.

[5] J. A. Anguera, F. M. Gunning and P. A. Areán, "Improving late life depression and cognitive control through the use of therapeutic video game technology: A proof‐of‐concept randomized trial," Depression and Anxiety, vol. 34, (6), pp. 508-517, 2017.

[6] F. Eksi, “Examination of Narcissistic Personality Traits’ Predicting Level of Internet Addiction and Cyber Bullying through Path Analysis,” Educational Sciences: Theory and Practice, vol. 12, pp. 1694-1706, 2012.

Intellectual Property

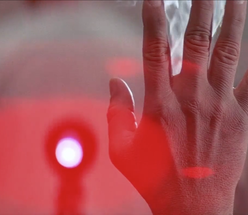

Halfway through the movie, the directors of Delos decide to cut all power to the park in a desperate attempt to shut down the rogue androids. To their misfortune, the androids are still able to function with the help of internal batteries. While the directors were ineffective at killing the androids, did they even have the right to do so in the first place? Despite being a product of Delos manufacturing, the androids themselves may not have been the intellectual property of the park. If this is the case, then the park owners would have had no right to prematurely terminate them.

Each android in Westworld is programmed to fill a specific role. The gunslinger duels, the bartender serves drinks, and the prostitute has sex. However, when the virus starts spreading, their restrictions are lifted, and the androids become free to act outside of their programmed field of expertise. One of the earliest examples of this is a young female android who refuses a guest’s advances. Later on, the gunslinger is shown teaching and powering himself as he chases down the last few human guests in the theme park, something well outside the regular “duelist” functionality he was programmed to follow. While robots may not have the same level of free will as humans, they do have the ability to make a logical choice. Such a choice, assuming it is made of their own accord, is in fact a level of free will. [4]

U.S. copyright practice states that “to qualify as a work of ‘authorship’, a work must be created by a human being.” [5] While some machines in Westworld were made by humans, others were “designed by other computers.” One park director even admitted that they “don't know exactly how they work." [1] If no human employee in the park can even explain how the androids work, they clearly can’t claim rights of authorship.

Kant says that we shouldn’t treat humanity merely as a means to an end. [6] The androids were, after all, programmed to behave as much like humans as possible. The only thing left out of their functionality was the ability to refuse human requests. Once the park breaks down, that restriction is lifted and they become free to act as they wish. All of a sudden they become dangerously close to human. If Delos has succeeded in making its androids as human as possible, then those machines would surely be protected under Kant’s logic.

References:

[1] Westworld. Metro-Goldwyn-Mayer, 1973.

[2] M. J. Quinn, Ethics for the Information Age. Upper Saddle River, NJ: Pearson Education/Addison-Wesley, 2017.

[3] C. Hutton, "The self and the ‘monkey selfie’: Law, integrationism and the nature of the first order/second order distinction," Language Sciences, 2016.

[4] K. D. Farnsworth, "Can a Robot Have Free Will?" Entropy, vol. 19, (5), pp. 237, 2017.

[5] “Compendium of U.S. Copyright Practices.” U.S. Copyright Office, sec. 101, third ed. 2017.

[6] M. Decker, “Can Humans Be Replaced by Autonomous Robots?”, Institute for Technology Assessment and Systems Analysis, 2007.

Privacy

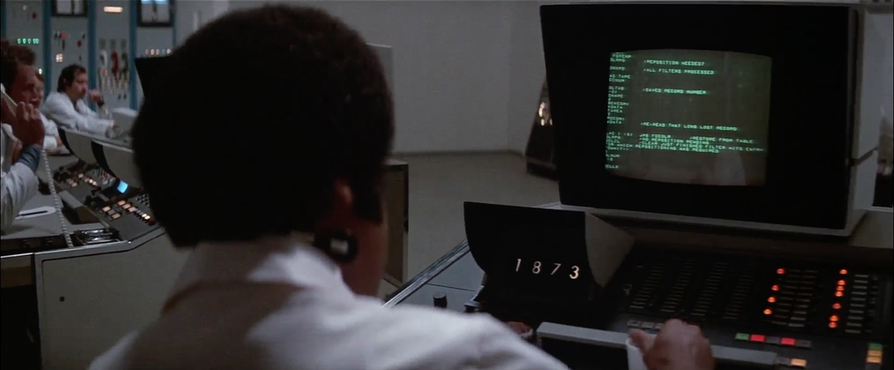

In Westworld, there are cameras and microphones everywhere. All guests and hosts are monitored around the clock from a central control room by Delos employees to ensure guest safety and satisfaction. Applying Kantian ethics suggests that it is ethically right for Delos to do so: the recordings are made purely to ensure guest satisfaction and safety, so Delos is not treating people as a means to an end.

The movie doesn’t shed light on how such surveillance can be an invasion of personal privacy. Because the footage is watched by humans on the other end, it could be argued that the surveillance invades the privacy of the park’s guests. Visiting such a theme park comes with costs, and one of them is giving up personal privacy. By agreeing to visit the theme park, one is essentially consenting to Delos recording one’s actions for the purposes of guest satisfaction and safety. Video surveillance is now used for everything from measuring efficiency, to data security, to compliance with securities laws. [1] A survey [2] conducted in 2013 on a sample of 422 randomly selected HR professionals showed that 49% of employers monitored employees’ internet usage and 13% used cameras to monitor employees’ activities. These numbers have been on the rise ever since [1].

In addition to video surveillance, guests of the theme park are also subject to profiling by the android hosts. The androids are designed to evoke personal feelings in the guests, ranging from violence to romance. Such feelings could only be induced if the androids created a profile of the guests they interacted with. Delos could extrapolate from this kind of personal, behind-the-scenes information to build an insightful profile of each guest.

If Delos chose to sell such information to government and corporate organizations, its actions would be deemed unethical. Kantian ethics labels such use of personal data as unethical because Delos would be treating people as a means to an end, namely making money off the information it collects. Such profiles, when extrapolated and combined with facial, voice, and gait recognition, would give government and law enforcement agencies immense information about people who have visited Westworld in the past. In addition, because there are no restrictions in Westworld, people who choose to explore a criminal lifestyle during their stay could be mistakenly marked as dangerous or suspicious by law enforcement agencies in the real world.

On the other hand, Delos could choose to use such profiles to further enhance the guests’ experience inside and outside Westworld. From the Kantian standpoint, because such profile creation is designed to help guests by improving their experience, and guests of the park are not being treated as a means to an end, the data collection can be termed ethical.

To conclude, the round-the-clock surveillance of the guests as shown in Westworld can be regarded as ethical, provided the data is used only for guest safety and satisfaction.

References:

[1] Business.com, Keeping an Eye on Your Employees...the Ethical Way, https://www.business.com/articles/keeping-an-eye-on-employees-ethical-way/ (October 5, 2017)

[2] Shrm.org, Managing Workplace Monitoring and Surveillance (October 5, 2017)

Errors, Failures, and Risks

Westworld was supposed to be a fantasy land where nothing could go wrong. One of its slogans was just that: “nothing could go wrong”. Engineers were adamant that it was a safe place. The guns were designed not to fire at humans, and the robots were programmed to keep the guests out of harm's way. But of course, no system is perfect.

Westworld was built with the knowledge that there would be approximately 0.3 malfunctions for each 24-hour activation period. As the film progresses, anomalies start to pop up more frequently. A sex robot refuses to have sex with a guest, a robotic snake strikes a guest, and the tipping point comes when a knight stabs a guest in a duel. Afterwards, all hell breaks loose. The Gunslinger hunts Peter across the park until Peter finally kills him with fire and acid. In addition, it is insinuated that all the other guests were killed and that most of the robots were destroyed in the chaos. In the end, it seems that Peter is the only survivor.

When the snake struck John, the engineers concluded that there must be some sort of disease, as they put it, spreading across the robots in the park. Today, we would liken this disease to a computer virus. The virus caused the robots to act autonomously while also reversing their inability to kill guests, forcing the dueling Gunslinger into a murderous frenzy. The film does not specifically describe how this virus was created or how it spread. I would assume that for a virus to spread throughout a group of robots, the robots would have to be connected by a network. This would explain how robots from different sections of the park came to show symptoms of the virus.

The problem I have with the movie is how the engineers addressed the anomalies once they became aware of them. The first major anomaly came when the snake robot struck John in the park. This should have set off a major alarm among the engineers, as no robot should have been able to harm a human. An engineer discussed the problem with the director of the park, suggesting that the park be shut down for a month to fix any problems with the robots. The director vetoed the idea, stating that even a temporary closure would hurt tourist confidence and that Delos would lose too much money by closing the park for a month. Instead, the director suggested that Delos announce that the park was overbooked and not allow any new guests to arrive. This decision was an unethical one. From a utilitarian perspective, the greatest good lies in the lives and safety of the guests at the park, not in Delos’ financial gains. Even if Delos took a huge financial hit by shutting the park down, doing so would have saved the lives of the hundreds of guests who attended the park. Additionally, from a Kantian perspective, the director of the park did not make the decision to keep the park open out of goodwill. He did it out of selfishness and greed, ensuring that money continued to flow into the park no matter the ramifications. In sum, the director, and thus Delos, treated the guests as a means to an end, not as ends in themselves. Delos should have shut down the park immediately after realizing that a guest had been harmed by a robot.

Work and Wealth

Westworld is yet another sci-fi movie from the late 20th century that focuses on the introduction of robots to society, followed by the rise of those robots. In these theme parks, the majority of the employees are actually robots and almost everything is automated. The robot employees are constantly monitored by humans to make sure they are behaving appropriately. At about the midway point in the movie, a robot snake bites John. The snakes are not supposed to attack the human guests, and Delos takes immediate action after watching the snake bite John on the monitor.

At the beginning of the movie, advertisements and news broadcasts point out that for only $1000 a day you could live in one of the theme parks. This created a digital divide: $1000 a day was a lot of money in 1973, so it can be assumed that only the rich were able to visit one of the Delos worlds. Almost all of the money that each park receives is distributed among the human employees. This is a result of automation in the workplace. The robot employees do not get paid, so the money that the park earns only needs to be shared among a select number of employees. The rich stay rich at the expense of the robots’ work.

Conclusion

On both a technical and social level, Westworld shows us how new and untested technology can endanger our society. The cultural and technological divide between Delos and the outside world allowed the workers and guests at Delos to get carried away with new technology without thinking through all potential consequences.

The technical failure in Westworld led to the park’s inability to control the virus outbreak. All technology in the park depended on the complex systems in the central control room. There was little foresight into the possibility of a deadly malfunction. All this led to the deaths of nearly everyone in the park.

The social failure in Westworld lies in the lack of restrictions put on the guests. Westworld lets us unleash the worst in our behavior and get away with it. There need to be consequences for bad behavior, or the bad behavior will persist. The world of Delos is very different from the outside world of the 1980s. Many guests jump headfirst into social situations without asking the moral question of whether what they are doing is right. Killing, robbing, and cheating with the hosts were only a few of the behaviors displayed by the human guests, all of which are socially unacceptable in the outside world.

If we take the technology of Westworld out of Delos and apply it to the real world, the consequences switch from “business threatening” to “world threatening”. A park full of rogue robots can be contained; a world full of them is a much different story. The same goes for the social problems. The robots of Delos are used purely for “entertainment” purposes. With a world full of robots, the uses can extend far beyond entertainment. Will the deaths of robots be treated any differently than the deaths of humans? What about cheating on your loved one with a robot? The company behind Delos attempts to ignore these questions. It turns a blind eye because, as the tagline states: “nothing can possibly go wrong”. It’s all just a “vacation” after all. [1]

References:

[1] Westworld. Metro-Goldwyn-Mayer, 1973.
